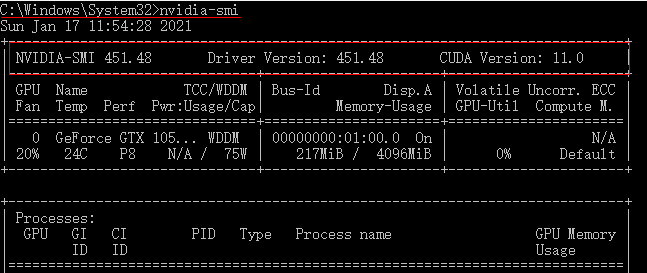

"/job:localhost/replica:0/task:0/device:GPU:1": Fully qualified name of the second GPU of your machine that is visible to TensorFlow."/GPU:0": Short-hand notation for the first GPU of your machine that is visible to TensorFlow."/device:CPU:0": The CPU of your machine.They are represented with string identifiers for example: TensorFlow supports running computations on a variety of types of devices, including CPU and GPU. If you would like to use Nvidia GPU with TensorRT, please make sure the missing libraries mentioned above are installed properly. 02:52:22.648933: W tensorflow/compiler/tf2tensorrt/utils/py_:38] TF-TRT Warning: Cannot dlopen some TensorRT libraries. 02:52:22.648924: W tensorflow/compiler/xla/stream_executor/platform/default/dso_:64] Could not load dynamic library 'libnvinfer_plugin.so.7' dlerror: libnvinfer_plugin.so.7: cannot open shared object file: No such file or directory 02:52:22.648829: W tensorflow/compiler/xla/stream_executor/platform/default/dso_:64] Could not load dynamic library 'libnvinfer.so.7' dlerror: libnvinfer.so.7: cannot open shared object file: No such file or directory Print("Num GPUs Available: ", len(tf.config.list_physical_devices('GPU')))

SetupĮnsure you have the latest TensorFlow gpu release installed. To learn how to debug performance issues for single and multi-GPU scenarios, see the Optimize TensorFlow GPU Performance guide. This guide is for users who have tried these approaches and found that they need fine-grained control of how TensorFlow uses the GPU. The simplest way to run on multiple GPUs, on one or many machines, is using Distribution Strategies. Note: Use tf.config.list_physical_devices('GPU') to confirm that TensorFlow is using the GPU. TensorFlow code, and tf.keras models will transparently run on a single GPU with no code changes required.
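As a minimal sketch of the GPU check and the device string identifiers described above, the snippet below lists the visible GPUs and pins a small computation to the CPU with `tf.device`. The tensor values and the variable names `gpus`, `a`, `b`, and `c` are illustrative assumptions, not taken from the guide.

```python
import tensorflow as tf

# List the GPUs visible to TensorFlow; an empty list means CPU-only execution.
gpus = tf.config.list_physical_devices("GPU")
print("Num GPUs Available:", len(gpus))

# Pin a small computation to the CPU by its string identifier.
with tf.device("/device:CPU:0"):
    a = tf.constant([[1.0, 2.0], [3.0, 4.0]])
    b = tf.constant([[1.0, 0.0], [0.0, 1.0]])  # identity matrix
    c = tf.matmul(a, b)

# In eager mode, a tensor reports the fully qualified name of its device.
print(c.device)
```

On a machine with a GPU, changing the identifier to `"/GPU:0"` would be expected to place the same computation on the first visible GPU.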
